Digitizing archived paintings

- Job: University Project

- Date: Feb 2016 - May 2016

- Technologies: Python, Anaconda, OpenCV, Image Processing, Layout analysis, Optical Character Recognition

- Code: https://github.com/GrimReaperSam/Cini-OCR

The Giorgio Cini Foundation is a non-profit cultural institution located in Venice, Italy. It aims to create a cultural center on the island of San Giorgio Maggiore. As part of this goal, I worked on a semester project at EPFL (DHLAB) to automate the digitization of a large dataset of painting photos. The front and back scans of each photo need to be processed and then associated with the painting and its textual description. The final pipeline takes the front and back scans as input and returns the bounding boxes of the painting, the text area, and the barcode. It also locates each text section individually and extracts its text using OCR.

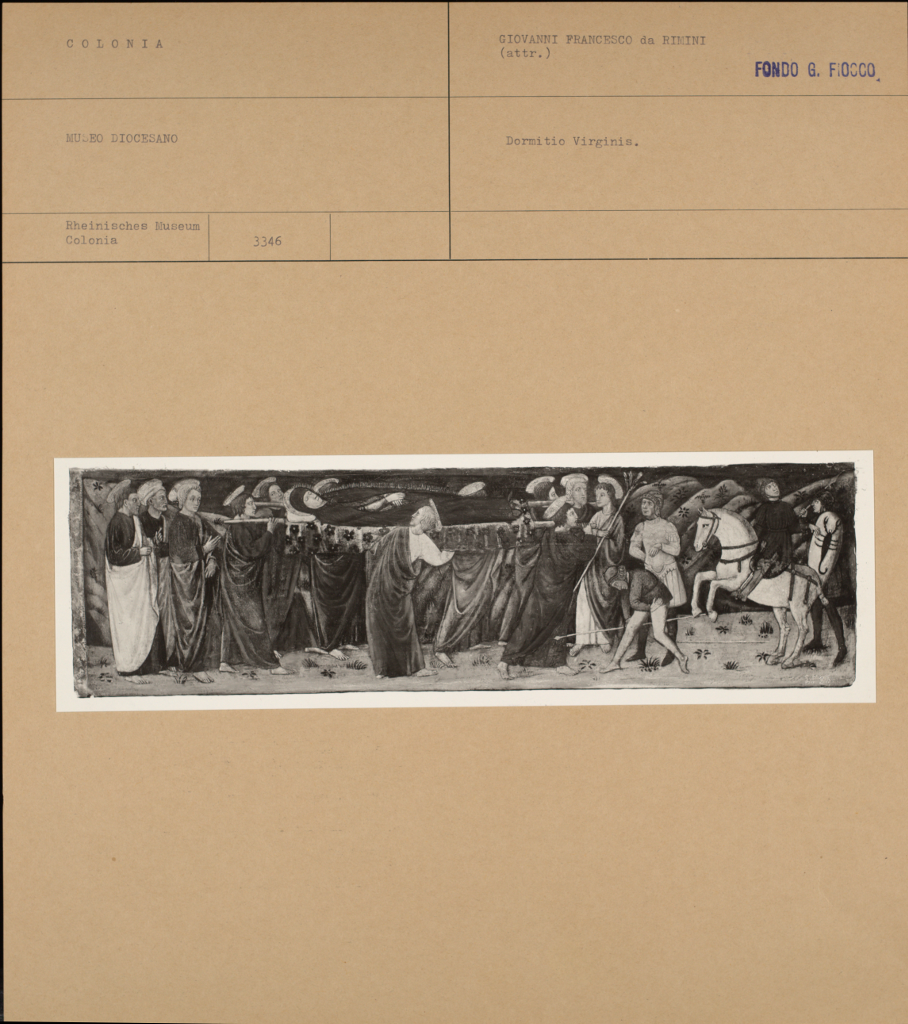

Recto scan

Using different morphology operators, gradients, and Otsu thresholding under spatial constraints, the painting and text boxes are identified, cropped from the image, and re-aligned. The painting is identified as the largest cluster remaining after processing the image, while the text area is found by taking the lowest horizontal line above the painting box, located using the Hough transform. The results for the scan above are shown below.

Painting

Text Area

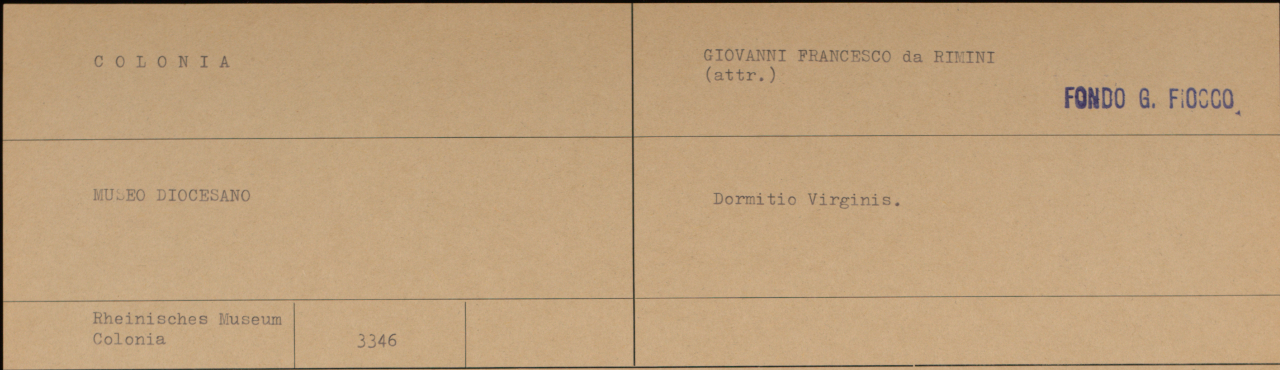
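The thresholding and "largest cluster" steps can be illustrated with a small, dependency-free sketch. It is not the project's code: it assumes a grayscale image stored as a list of rows of 0-255 integers, and it assumes the painting is brighter than the background. The function names are hypothetical. In practice, OpenCV's `cv2.threshold` with the `cv2.THRESH_OTSU` flag and `cv2.findContours` would do the equivalent work much faster.

```python
from collections import deque


def otsu_threshold(pixels):
    """Return the grey level (0-255) that maximises between-class variance."""
    hist = [0] * 256
    for value in pixels:
        hist[value] += 1
    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(hist))

    best_level, best_variance = 0, -1.0
    weight_bg, sum_bg = 0, 0
    for level in range(256):
        weight_bg += hist[level]
        if weight_bg == 0:
            continue  # no pixels at or below this level yet
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break  # every pixel is already in the background class
        sum_bg += level * hist[level]
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        variance = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if variance > best_variance:
            best_level, best_variance = level, variance
    return best_level


def largest_component_box(image, threshold):
    """Bounding box (x, y, w, h) of the largest 4-connected bright region."""
    height, width = len(image), len(image[0])
    seen = [[False] * width for _ in range(height)]
    best_size, best_box = 0, None
    for y0 in range(height):
        for x0 in range(width):
            if seen[y0][x0] or image[y0][x0] <= threshold:
                continue
            # Breadth-first flood fill over one connected region.
            queue = deque([(x0, y0)])
            seen[y0][x0] = True
            size = 0
            min_x = max_x = x0
            min_y = max_y = y0
            while queue:
                x, y = queue.popleft()
                size += 1
                min_x, max_x = min(min_x, x), max(max_x, x)
                min_y, max_y = min(min_y, y), max(max_y, y)
                for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and not seen[ny][nx] and image[ny][nx] > threshold):
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            if size > best_size:
                best_size = size
                best_box = (min_x, min_y, max_x - min_x + 1, max_y - min_y + 1)
    return best_box


def find_painting_box(image):
    """Threshold with Otsu's method, then keep the largest bright region."""
    pixels = [value for row in image for value in row]
    return largest_component_box(image, otsu_threshold(pixels))
```

Calling `find_painting_box(image)` returns a single `(x, y, w, h)` tuple, or `None` when no pixel is above the threshold. Small bright specks are ignored because only the largest region is kept.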

The text area is then processed with Kraken, a layout analysis tool. It tries to find the smallest bounding boxes around the content of the image, giving a result like this:

Text Location

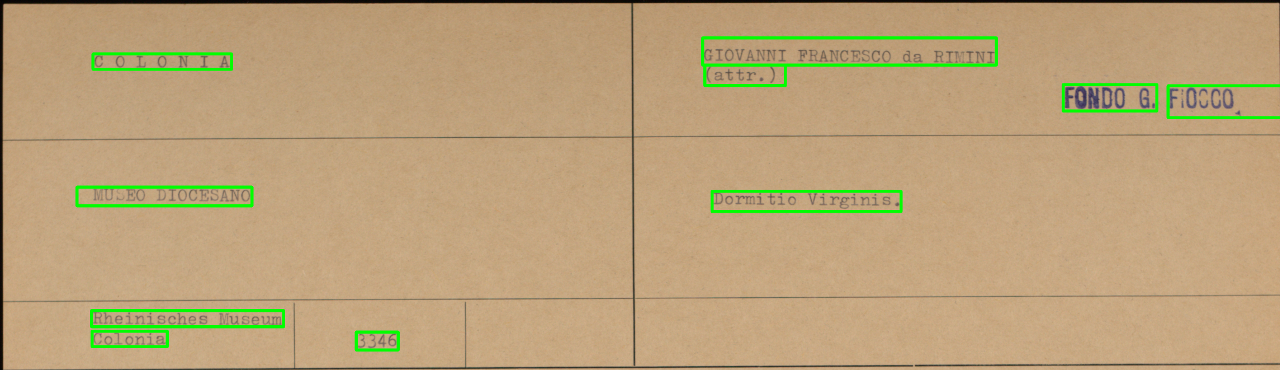

The text area is also processed using Frangi filters, which detect ridges in the image (ridges are edges between two similar regions). The result is an image segmentation that separates all the parts of the text area, as follows:

Layout Analysis

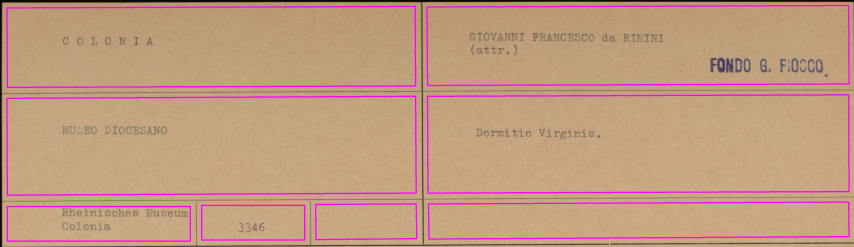

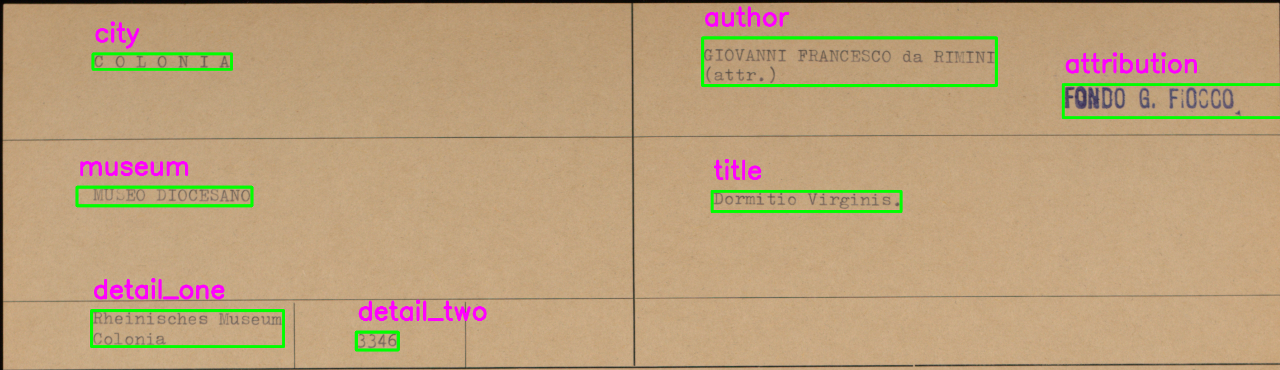

The results from both methods are merged and then sorted vertically and horizontally in order to classify each area. For example, the top-leftmost area is the city in which the painting can be found. The end result of the process looks something like this:

Text Classification
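The merge-and-sort step can be sketched roughly as follows. This is an illustration under assumptions, not the project's implementation: boxes are `(x, y, w, h)` tuples, two boxes are fused when their intersection over union reaches a hypothetical `min_iou` of 0.5, and boxes whose top edges lie within a hypothetical `row_tolerance` of 10 pixels are treated as one row. The label order passed to `classify` is also an assumption, apart from the top-leftmost box being the city.

```python
def iou(a, b):
    """Intersection over union of two (x, y, w, h) boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    inter_w = min(ax + aw, bx + bw) - max(ax, bx)
    inter_h = min(ay + ah, by + bh) - max(ay, by)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0
    inter = inter_w * inter_h
    return inter / (aw * ah + bw * bh - inter)


def union(a, b):
    """Smallest box that contains both a and b."""
    x1, y1 = min(a[0], b[0]), min(a[1], b[1])
    x2 = max(a[0] + a[2], b[0] + b[2])
    y2 = max(a[1] + a[3], b[1] + b[3])
    return (x1, y1, x2 - x1, y2 - y1)


def merge_boxes(first, second, min_iou=0.5):
    """Combine two detection lists, fusing boxes that overlap strongly."""
    merged = list(first)
    for box in second:
        for i, kept in enumerate(merged):
            if iou(kept, box) >= min_iou:
                merged[i] = union(kept, box)
                break
        else:
            # No strong overlap with any kept box: treat it as a new area.
            merged.append(box)
    return merged


def reading_order(boxes, row_tolerance=10):
    """Sort boxes top to bottom, then left to right within each row."""
    rows = []
    for box in sorted(boxes, key=lambda b: b[1]):
        if rows and abs(box[1] - rows[-1][0][1]) <= row_tolerance:
            rows[-1].append(box)
        else:
            rows.append([box])
    return [box for row in rows for box in sorted(row, key=lambda b: b[0])]


def classify(boxes, labels):
    """Pair each label with the box at the same position in reading order."""
    return dict(zip(labels, reading_order(boxes)))
```

For example, `classify(merge_boxes(kraken_boxes, frangi_boxes), ("city", "author", "title"))` maps `"city"` to the top-leftmost merged box. A fixed label order only works if every scan follows the same card layout, so a real pipeline would likely need extra checks for missing or additional areas.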

After that, each identified section is cropped and parsed using Tesseract OCR, and the results are stored in a JSON file as a set of keys and values for this image, with a structure similar to the following:

    {
        "city": "COLONIA",
        "author": "GIOVANNI FRANCESCO da RIMINI (attr.)",
        "attribution": "FONDO G. FIOCCO",
        "museum": "MUSEO DIOCESANO",
        "title": "Dormitio Virginis.",
        "details": ["Rheinisches Museum Colonia", "3346"]
    }
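A minimal sketch of how such a record could be assembled and written is shown below. It uses only the standard library and takes the OCR step as a callable, so the helper names `build_record` and `save_record` are hypothetical, as is the assumption that multi-part sections such as `details` arrive as a list of crops. With the `pytesseract` wrapper installed, `pytesseract.image_to_string` could be passed as `ocr`, although whether the project used that wrapper is not stated here.

```python
import json
from pathlib import Path


def build_record(crops, ocr):
    """Run `ocr` on every labelled crop and collect the cleaned text.

    `crops` maps a section label to one crop, or to a list of crops
    for sections made of several parts.
    """
    record = {}
    for label, crop in crops.items():
        if isinstance(crop, (list, tuple)):
            record[label] = [ocr(part).strip() for part in crop]
        else:
            record[label] = ocr(crop).strip()
    return record


def save_record(record, path):
    """Write the record as UTF-8 JSON, keeping accented characters readable."""
    text = json.dumps(record, indent=2, ensure_ascii=False)
    Path(path).write_text(text, encoding="utf-8")
```

Stripping the OCR output removes the trailing newlines and padding that OCR engines often append, and `ensure_ascii=False` keeps any accented names legible in the saved file.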